Why bother scanning and digitizing documents and books? What is the justification of such enormous effort to convert the cultural legacy of humans to digital form? I often hear such questions - from historians who prefer the “smell and touch” of the original documents or archivists who claim that microfilms are good enough. Is digital technology just a the fashion that will soon pass, or does it have a deeper significance?

If you think that there is something powerful in the digital, read on. We will explore why digital is important - for archives, libraries, museums (GLAM) and all the producers and consumers of cultural goods. In the next three sections we will discuss the three reasons for switching (or, perhaps, returning) to digital information processing: Preservation, Discoverability, Access and review the oldest discrete information systems known to us.

Preservation

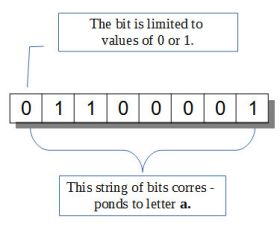

Digital is only one of many implementations of discrete information storage and processing. Most of the signals that reach our senses, be it a rainbow, a symphony or the smell of a rose, can be considered analog. Analog means that the signal can take any value, for example a tone in music or a color in visible spectrum. The range of possibilities is typically limited only by capabilities of our senses - we cannot see the infrared, nor hear the ultrasound. Once the signal enters our eye (or a digital camera), it does not retain the continuity of the original signal. In the eye retina the sensors - rods and cones - act on the ‘all or nothing’ principle, in the camera the pixel sensors decompose the light into a small number of levels. In the discrete system only a limited, countable number of states is allowed, with nothing in between. All modern digital computers use the basic information unit of a binary bit, which can only have two states (commonly called 0 and 1). The mathematical theory of information, first proposed by Claude Shannon, also uses the binary bit with two possible states, implying that information is in its nature discrete. In computers, single bits are typically strung together: a group of 8 bits in sequence is called byte. In order to keep the generality of the discussion, we will call the smallest unit a character, and a string of characters a word. The digital computers thus operate with 2-state character and 8 character word.

Discrete information systems

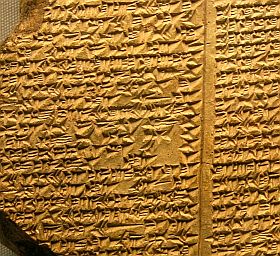

First computers were built during WWII, digital computing thus celebrates the 75-th anniversary this year. However, computers are not the first to process information using discrete states. Humans have developed many such systems throughout history, from smoke signals (two-state character, smoke or no-smoke) to Morse code (six-state character). The biggest human invention of discrete information storage is, however, the alphabet. It is generally agreed that the earliest alphabet was invented by Sumerians about 5200 years ago. The language is of course much older, between 2 million and 200,000 years old.

The number of different characters depends on the language, from some 26 in the Latin alphabet to thousands in the Chinese. It could not be otherwise, as the alphabets were invented at least several times independently. Let us take the Latin alphabet and try to estimate the number of states the character can take. We need to count all the different glyphs that can occupy a slot for a single character. There are 26 letters - lowercase, plus another 26 uppercase; there are 10 digits, assorted symbols like $, &, §, space, punctuation, etc. All told, the basic Latin character has some 120 to 150 states. The length of the word is variable, but in the common language rarely exceeds 20 characters. There is no upper limit for the length of a word, as newly invented technical naming conventions, for example in chemistry, allow one to string as many characters as needed to form a name.

Lossless copying

The invention of alphabet, a discrete representation of language, permitted storage, copying and communicating of information on a scale not possible before with verbal communication. Lossless copying is the most profound consequence of this invention. Analog signals quickly degrade when they are copied, as the game of Chinese whispers (the telephone game in the US) amply illustrates. The spread of rumors in a village, or multiple copying of a magnetic tape show the same effect. However, with a limited number of states, the character strings can be copied exactly (barring human errors).

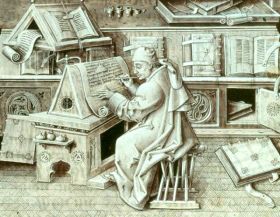

The media on which the texts were recorded can sometimes last hundreds or thousands of years. Fragments of the Sumerian clay tablets or Egyptian papyrus documents have been discovered. In general, however, relying on the medium is not wise. A fire can destroy a library or archive, be it the the Library of Alexandria two thousand year ago or the Ottoman archives in Sarajevo on February 7, 2014. The Epic of Gilgamesh, one of the earliest surviving works of literature, can be read today only because it has been copied many times. The practice of copying the text by monks and later by professional scribes flourished in 13 and 14 century (it has a name: Manuscript Culture) and contributed to the survival of many ancient texts. The copying was careful, with reviewers and quality check, but in practice, inevitable errors crept in. The number of errors is, however, many orders of magnitude smaller than in analog copying.

Machine readablity

Another aspect of the discrete written texts is machine readability. Writing is in its essence discrete, consisting of separate letters forming words (and then sentences, paragraphs etc.). However, up until recently letters and words were stored as marks on a medium (be it stone, clay or paper) - readable to humans who possess the language, but not to computers. There are techniques, such as ORC (Optical Character Recognition) that can -- although with mixed success -- automatically convert printed text into machine readable form, but in general, this process still requires human work. Once converted into a machine readable form, the computers can do all the wonderful things they do with information in general: create indexes, categorize, translate (still in infancy, but constantly improving), transform and more.

Other works of human culture, such as paintings or sound, are analog in nature, and are therefore more difficult to make machine readable. It is partially possible in case of sound, by individually encoding the tone, loudness and duration of each component of a complex sound. Metadata that contain information about the object are also typically machine readable.

Error correction

The alphabet is an old invention, but it is not the oldest discrete information system known to humans. To locate the origins of the oldest, we have to travel back some 3.5 billion years. To put this number in perspective, the age of Earth is estimated to be 4.5 billion years, and the age of the universe we live in to 13.8 billion years. The oldest information system has a 4 state character, and 3 character word, and stays unchanged for some 3.5 billion years due to lossless copying and error correction. It is called the genetic code. The four states of the character are actually 4 different chemical compounds, conveniently named A,C,G,T. The word is a string of 3 such letters and it codes one of some 20 or so bigger molecules, called amino acids. Amino acids are strung together to form proteins, of which there is an uncountable variety - 10 million or more.

The chemical carrier of the genetic code, DNA, is not very durable as a medium. It survives in living cells, being copied and recopied many times, but not much longer after death. The information that it contains is, however, extremely tenacious. Random errors that naturally occur in the living organisms, are repaired by a set of complex mechanisms. Cellular DNA repair system results in error rate of one per billion or ten billion. The natural selection acts as the the next layer of error checking. The few errors that escape those mechanisms obviously do creep in, or we would not be here to read this blog, but they are rare and far between - no computer error checking is yet close to matching this system. The information endures, because it is copied, very faithfully, from generation to generation.

Error correction and verification is also a feature of modern digital computers. They are built into operating systems, exist as so called “hash codes” that can assist error-checking of a file transported over uncertain transmission channels. All those mechanisms are actively employed in copying information.

Preservation

We cannot count on the durability of media such as paper, film or clay slabs for long term preservation. The lessons from our own culture and from biology are unequivocal - only copying information, creating multiple copies with the best error correction, can preserve the legacy of human culture for generations to come.

Discoverability

Working in an archive, one very frequently encounters queries like this “My grandfather took part in the battle of (…). Tell me please, what happened to him next?”. Each time we try to explain that the information may be somewhere in the 1.5 million pages of documents in our archive. In the short time that Internet is with us, people have learned to rely on Google or Wikipedia to find anything. In fact the Internet already acts as our ‘external memory’, searchable faster than the one in our heads. Discoverability is aided by increasing supply of metadata as well as by general-purpose search engines.

What was in the era of paper books called “Indexing” now acquired a new name, “Metadata”. Organizations that house cultural resources (GLAM) increasingly make the metadata available and searchable on the Internet, whether the resource itself is freely available or not. People may still have to come to the library to borrow a book, but at least can quickly find the single piece of information they need.

There are two trends, both promising much better search results in the future. One is improvement in natural language processing, which helps Google and other search engines better understand both our questions and the answers buried in complex sentences. The internet search already goes beyond simple keywords, giving you better answers to questions in plain English (searching in plain Polish and other languages doesn’t work nearly as well), and one can expect growing sophistication of such tools. The other trend is the growing supply of structured information, or metadata. I have written before about Linked Data -- a form of metadata -- in which information is labeled as are relationships between objects. The more Linked Data the internet has, the better able it will be to provide answers to much more complex questions, and the more closely it will resemble real intelligence. As long as the information is in (or is converted to) digital form, we will be able to find it.

Access

The 20th century model of access to cultural resources changed drastically in the past few decades. Before the Internet, there were books, bookstores and libraries. If you were a collector, you would build your own library; if you could not afford it or had no place for it, you could read books in a library - usually open to the public and plentiful. Art, historical or archaeological artifact were displayed in museums (or stored in museum vaults). Only the most popular books were widely read, with the older ones going ‘out of print’ and disappearing. In the 21 century, due to a collision of new digital technology and the 20 century copyright law, you could still buy a paper book, but the options for digital version of the book are severely limited. You could not buy an e-book in the same way you can purchase a printed copy - you only obtain a license with strict limitations. The rental of electronic books is in its infancy, and the libraries have a very limited choice of e-books. Emergence of the Internet changed the landscape dramatically. Old books, old movies and art are much more accessible, and have undergone a renaissance. Today, there is a segment of human culture that is freely accessible (fortunately it is growing) and a segment that has remained blocked due to copyright restrictions, and which ultimately may be lost. The new generation relies almost exclusively on the internet for the supply of information and for access to the cultural goods.

When a resource is “put on the Internet”, it becomes accessible from any place of the globe. The increase in accessibility is staggering. Google has some 500 million searches a day, Wikipedia is accessed millions times daily and each time the user makes use of some of the found information. The small section of the Pilsudski Institute historical archive that was digitized and is openly accessible online enjoys about 200 fold increase in number of visitors - about 40 thousand a year. The increase is not only in absolute number, but also in geographical spread. With no need to travel, the information requests to the Institute online archives come from some 2,500 distinct geographical locations over the whole world. This is possible because the resources are digitized, indexed, and freely accessible. The future of access to text, images, music, moving images and all other new inventions of human culture is digital.

Read more:

- Manuscript Culture - Wikipedia article on the Manuscript Colture in Middle ages.

- Naprawa DNA - article on the cellular DNA repair mechanisms.

- Search Engines Change How Memory Works - article in Wired.

- Google Search scratches its brain 500 million times a day - article in CNET.

- Paper Rules: Why Borrowing an e-book from your library is so difficult - article in Digital Trends.

- Semantic Search - Wikipedia article on searches using natural language sentences.

Marek Zieliński, April 4, 2014

Explore more blog items: