- Marek Zielinski

Digital photo albums to survive a generation or more. Part 1

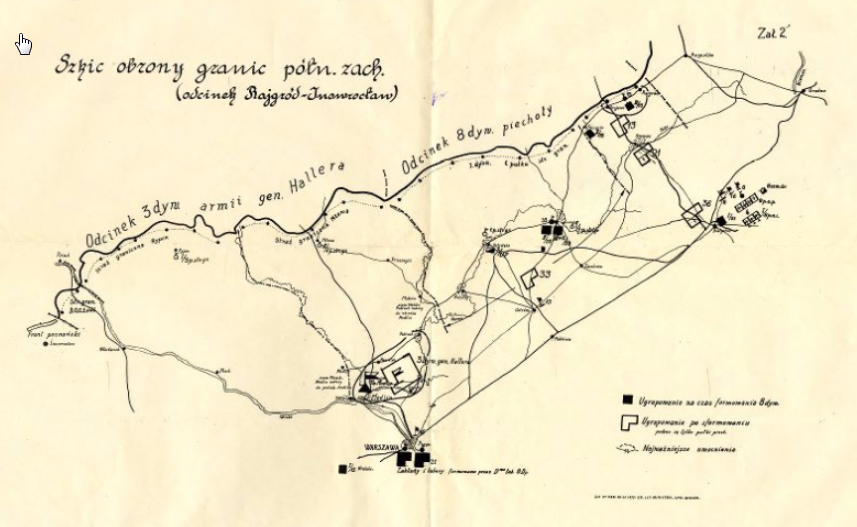

A photo from Władysława’s album with her father among members of the organization “Sokół”, in Silna, Poland, early 1920s.

Introduction

My wife’s aunt Władysława always kept the family albums ready to be packed in a backpack, so that during WWII, when the family were expelled from their flat in Łódź and during the forced migrations that followed, the albums always traveled with her. This way the albums survived, and we can now enjoy family photos going back several generations. The durability of black-and-white photography, and of the good-quality, rag-based, non-acidic paper on which the photos were printed, helped preserve the hundred-year-old prints.

Color photos from 20 or 30 years ago did not fare as well. Organic dyes fade quickly, and some prints have lost most of their colors. We are working on digitizing them, trying to restore the colors digitally, and converting them to digital albums.

Creating digital copies of the old albums also has another goal, in addition to preservation. The family was scattered around the world. Brothers and sisters who lived or worked in different partitioned regions of Poland before 1918 ended up in different countries; some returned to the reborn Poland, some settled in Germany, France, the US, the UK and elsewhere. A single album is not enough, but an online version can be viewed by many.

Which brings us to the question: how to create a durable, long-lasting electronic album?

About 15 years ago, we installed Gallery2, an open source, web-based photo gallery, which had everything necessary to build a collection of online albums. We used a desktop program, Picasa, to organize and annotate the images. Now Gallery2 and Picasa are no more (or no longer maintained, which is almost the same). This article is about our effort to rebuild the albums so that they can survive for at least one generation (say, 25 years).

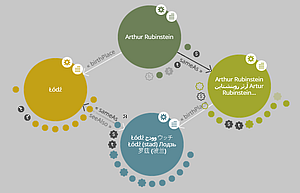

How can we predict the future, even just 25 years ahead, in the fast-changing landscape of the digital world? One factor that will help (and guide us) is the strong conservatism of programmers and builders of computerized systems. Take Unicode, for example: a universal alphabet, or mechanism to represent characters in almost all writing systems in use today. We will stress it later, because without Unicode one cannot really annotate pictures, for example a photo report about a trip from Łódź to Kraków to Košice to Hajdúböszörmény to Škofja Loka to Zürich to Besançon to Logroño. Unicode is about 25 years old, but it was not the first encoding: ASCII, which covers only the basic Latin alphabet, dominated computing for decades before it. Today many programs and systems, even ones built quite recently, still do not support Unicode. Similar conservatism affects formats for recording photographs (raster graphics). Some formats, like TIFF and JPEG, which were introduced 30 years ago, were the first useful formats and became very popular. Newer standards like JP2, which are better in some respects, have a very difficult time gaining acceptance.
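To make the Unicode point concrete, here is a minimal sketch that checks whether the place names from the trip above survive a round trip through a given character encoding. The helper name `survives` is hypothetical, and Latin-1 is included only as an illustration of an older single-byte encoding; neither comes from the original albums project.

```python
# Place names taken from the travel example in the text.
places = [
    "Łódź", "Kraków", "Košice", "Hajdúböszörmény",
    "Škofja Loka", "Zürich", "Besançon", "Logroño",
]


def survives(text: str, encoding: str) -> bool:
    """Return True if text can be encoded and decoded back unchanged.

    Hypothetical helper for illustration only.
    """
    try:
        return text.encode(encoding).decode(encoding) == text
    except UnicodeEncodeError:
        # The encoding has no representation for at least one character.
        return False


for name in places:
    print(
        name,
        "utf-8:", survives(name, "utf-8"),
        "ascii:", survives(name, "ascii"),
        "latin-1:", survives(name, "latin-1"),
    )
```

Every name round-trips through UTF-8, none of them fits in ASCII, and Latin-1 handles only some of them (it has ü and ç but not Ł or Š), which is why a caption that mixes several languages really needs Unicode.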

Goals of the project

It may be helpful to state the goals of the project. We start with a collection of photographs in digital form, which may be scanned photos or born-digital images. The images are organized into albums, perhaps matching original bound albums, or created from scratch. We also have metadata: descriptions, persons and places, dates, etc. associated with the individual images, and with the whole albums. Metadata may also include provenance, history and other events associated with them.
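As a rough illustration of the kind of information described above, the sketch below models an album and its images as plain records and writes them out as UTF-8 JSON. All class and field names (`Photo`, `Album`, `persons`, `provenance`, and so on) are assumptions made for this example, not the format the project ends up using.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Photo:
    """Metadata for one image; all field names are hypothetical."""
    filename: str
    description: str = ""
    persons: List[str] = field(default_factory=list)
    places: List[str] = field(default_factory=list)
    date: str = ""        # free text, since old photos often have only approximate dates
    provenance: str = ""  # where the print or file came from, and its history


@dataclass
class Album:
    """An ordered collection of photos with its own metadata."""
    title: str
    description: str = ""
    photos: List[Photo] = field(default_factory=list)


album = Album(
    title="Władysława's album",
    photos=[
        Photo(
            filename="image-1.png",
            description="Her father among members of the organization Sokół",
            places=["Silna, Poland"],
            date="early 1920s",
        )
    ],
)

# ensure_ascii=False keeps Polish letters readable in the output
# instead of turning them into \uXXXX escapes.
text = json.dumps(asdict(album), ensure_ascii=False, indent=2)
restored = json.loads(text)
print(text)
```

Keeping the metadata in a plain text format next to the image files, rather than inside one program's database, is one way to avoid depending on software that may disappear, as Gallery2 and Picasa did.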

The goal of the project is to research and document a methodology for preserving the images, their organization into albums, and all the associated metadata. We aim to preserve them for future generations rather than for a fleeting, one-time viewing, which can easily be accomplished using social media today.

Beyond the scope of this project are the preservation of bound albums and original photographs, scanning, and the storage of digital copies. Those topics have a more or less extensive literature, although some specific topics may be presented in this blog later.